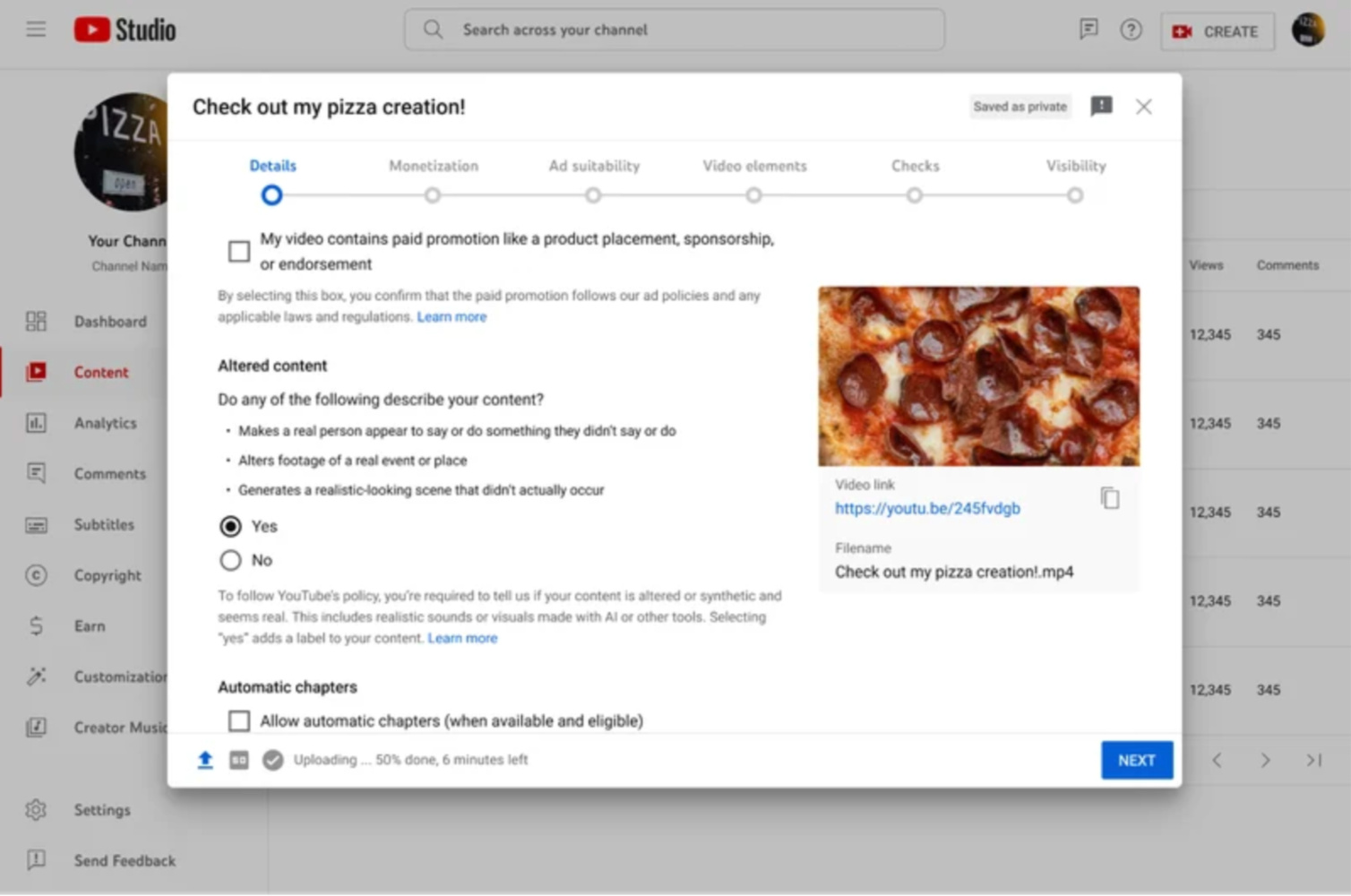

In a move to combat the spread of misinformation and deepfakes, YouTube is implementing a new policy that requires creators to disclose when their videos feature realistic content created or altered with artificial intelligence (AI). The platform is introducing a tool in Creator Studio that creators must use to flag such content, especially when it has the potential to mislead viewers.

The policy is aimed at greater transparency on YouTube. As AI tools grow more sophisticated, it is becoming easier to create fake but realistic-looking videos that could be used to deceive viewers. Deepfakes, in particular, have raised concerns about their potential to manipulate public opinion, especially during elections or other critical events.

What Needs to Be Disclosed

Creators must disclose videos that:

- Replace one person’s face with another’s or use a synthetically generated voice of a real person for narration.

- Alter footage of real events or locations in a misleading way.

- Depict realistic but fictional events that could be mistaken for real ones.

Exceptions to the Policy

YouTube doesn’t require disclosure for:

- Obviously fantastical content (e.g., unicorns, animated worlds).

- Use of AI for production assistance (e.g., generating scripts or captions).

How Viewers Will See Disclosures

Most videos will have a label in the description, while content related to sensitive topics like health or news will display a prominent label on the video itself. The rollout begins with the mobile app and will expand to other platforms in the coming weeks.

Enforcement

YouTube intends to enforce this policy and will consider measures against creators who consistently fail to apply the labels. In some cases, the platform may add a label itself, even when the creator has not disclosed, so that viewers are aware.