Google is expanding the capabilities of its AI Mode in Search with new multimodal features, allowing users to interact with both text and images for deeper, more flexible search results. The update lets users upload or take photos and then ask related questions, with the AI providing detailed responses, complete with links for further exploration.

Now rolling out to more users in the U.S. through Search Labs, the enhanced AI Mode builds on a custom version of Google’s Gemini 2.0 model. The feature supports complex queries and enables a more conversational search experience that goes beyond traditional keyword input. It’s especially useful for open-ended questions, product comparisons, planning, and step-by-step guides.

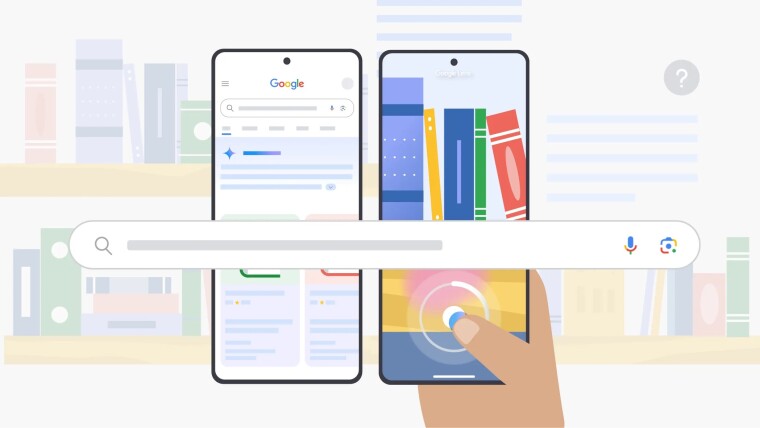

One of the major additions is the ability to understand images in context. According to Google, the AI can analyze the entire scene in a photo, including how objects relate to one another as well as their materials, colors, shapes, and arrangement. This allows for more meaningful visual searches. For example, snapping a picture of a cluttered bookshelf and asking for book recommendations can prompt the AI to recognize the titles and suggest similar reads.

The feature operates through a dedicated AI tab in Google Search, positioned alongside tabs like All, News, and Images. It leverages a technique known as “query fan-out,” where multiple sub-queries are processed simultaneously across various data sources. The results are then merged into a cohesive, informative response.
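
Google has not published how query fan-out is implemented, so the following is only a minimal Python sketch of the general idea described above: several sub-queries are sent to several data sources at the same time, and the results are merged into one list. The source functions `search_web` and `search_shopping`, and the `fan_out` helper itself, are hypothetical stand-ins invented for illustration, not part of any Google API.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical data sources (assumptions for illustration only).
# Each takes a sub-query and returns a list of result snippets.
def search_web(sub_query: str) -> list[str]:
    return [f"web result for '{sub_query}'"]

def search_shopping(sub_query: str) -> list[str]:
    return [f"shopping result for '{sub_query}'"]

SOURCES = [search_web, search_shopping]

def fan_out(sub_queries: list[str], sources=SOURCES) -> list[str]:
    """Run every sub-query against every source concurrently, then merge."""
    # One task per (source, sub-query) pair.
    tasks = [(source, q) for q in sub_queries for source in sources]

    with ThreadPoolExecutor(max_workers=len(tasks) or 1) as pool:
        # pool.map returns results in task order, so the merge is deterministic.
        batches = list(pool.map(lambda task: task[0](task[1]), tasks))

    # Merge step: flatten the batches and drop duplicates, keeping first-seen order.
    merged, seen = [], set()
    for batch in batches:
        for item in batch:
            if item not in seen:
                seen.add(item)
                merged.append(item)
    return merged

if __name__ == "__main__":
    for line in fan_out(["smart ring sleep tracking", "smartwatch sleep tracking"]):
        print(line)
```

In a real system the merge step would presumably rank and synthesize the results into a written answer rather than simply concatenating them; the sketch only shows the concurrent dispatch and the combining of results.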

Google notes that queries in AI Mode tend to be about twice as long as traditional searches. This suggests users are engaging more deeply with the tool, asking multi-part or follow-up questions to explore a topic in more depth. You might ask, “What’s the difference between sleep tracking features on a smart ring, smartwatch, and sleep mat?” and then follow up with, “How does heart rate vary during deep sleep?”

AI Mode’s multimodal update is available through the Google app on both Android and iOS. It works through the Google Lens feature, allowing users to start with an image and guide the AI through natural language prompts. While the feature was initially limited to Google One AI Premium users, it is now available to a broader group through Search Labs.

This development highlights Google’s push to turn Search into a more exploratory and AI-driven tool—especially at a time when users are looking for richer answers and more intuitive interaction with their devices.