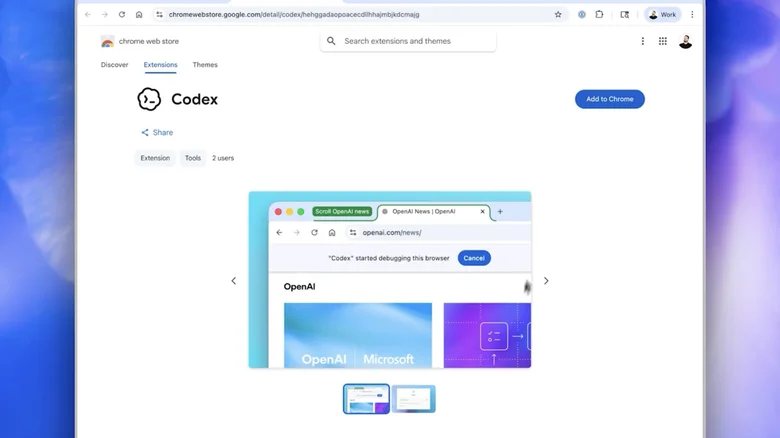

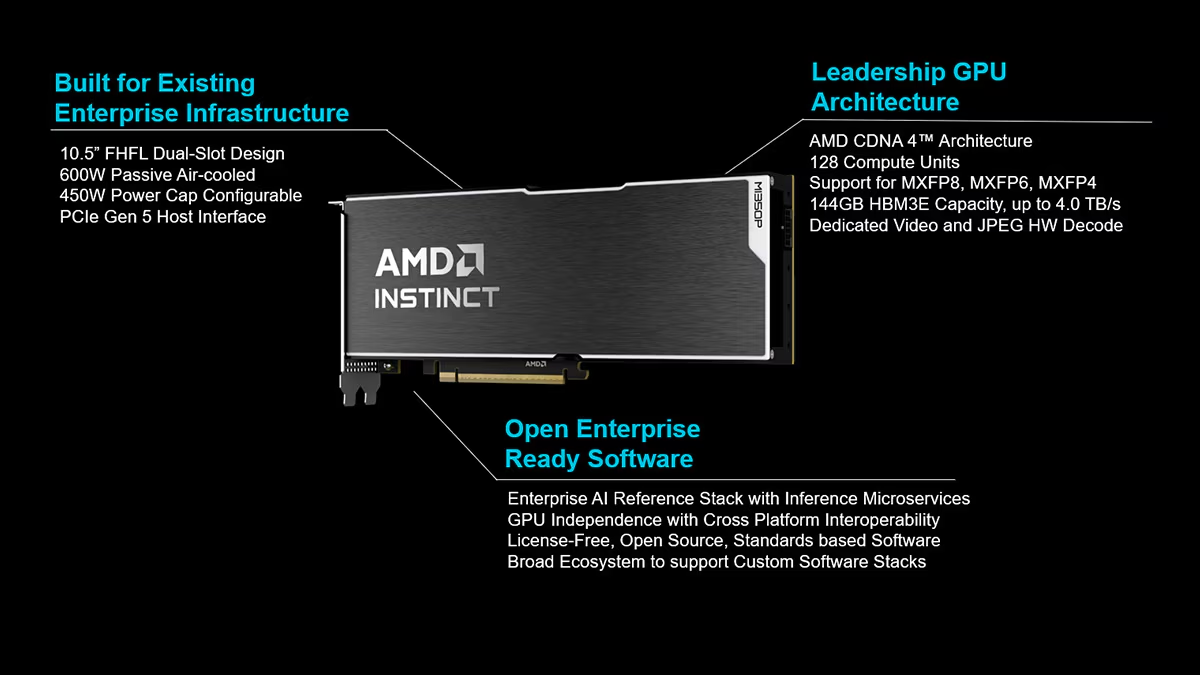

AMD has introduced the Instinct MI350P PCIe GPUs, a new line of dual-slot accelerator cards aimed at letting enterprises run AI inference workloads on their existing data center infrastructure rather than committing to major upgrades or relying solely on cloud services. Announced on May 7, 2026, the cards target organizations navigating the shift toward more advanced AI applications, including agentic systems, while avoiding the power, cooling, and rack redesigns often required by larger GPU platforms.

The core appeal lies in the form factor. These are standard air-cooled PCIe cards that slot into conventional servers supporting up to eight accelerators. For companies that need more compute than CPUs alone can deliver but aren’t prepared to overhaul their facilities, this offers a practical middle path. AMD positions the MI350P as suitable for inference and retrieval-augmented generation pipelines across small, medium, and large models. Early estimates suggest peak performance reaching 2,299 teraflops, scaling to 4,600 TFLOPS at MXFP4 precision, alongside 144 GB of HBM3E memory with up to 4 TB/s bandwidth. These figures come with the usual caveats—they are preliminary projections as of April 2026 and subject to change.

Support for lower-precision formats such as MXFP6, MXFP4, FP8, and sparsity acceleration for 8- and 16-bit operations should help deliver higher throughput while keeping power and cooling demands manageable within standard air-cooled environments. This matters because many data centers still operate under legacy constraints where liquid cooling or high-density power delivery remains expensive or logistically difficult. By focusing on these efficiencies, AMD is addressing a real friction point in enterprise AI adoption: the gap between experimental pilots and production-scale deployment.

On the software side, the cards emphasize openness. AMD provides an enterprise AI reference stack at no licensing cost, with integration for Kubernetes GPU Operator, cloud-native inference microservices, and frameworks like PyTorch. The idea is to enable workload migration with minimal code changes and avoid ongoing per-token cloud fees. This open-ecosystem approach contrasts with more proprietary alternatives in the market and could appeal to organizations wary of vendor lock-in, though real-world interoperability always depends on partner implementations and ongoing driver maturity.

Critically, these PCIe cards are not meant to replace high-end dedicated GPU platforms for the most demanding training runs; they serve as an accessible entry and scaling option for inference-heavy use cases. In that sense, they reflect a broader industry trend toward modular, infrastructure-friendly AI hardware as the technology moves beyond hyperscale data centers into mainstream enterprise settings. Whether the claimed performance and cost efficiencies hold up under sustained production loads will determine their impact.

Overall, the MI350P represents AMD’s continued push to broaden AI accessibility without forcing wholesale infrastructure replacement. For IT leaders balancing privacy concerns, cost predictability, and the need for on-premises control, it adds a credible option to the mix.