OpenAI has introduced a new safety feature for ChatGPT called Trusted Contact, allowing users to designate one person who can be notified during conversations flagged for potential self-harm risks. The rollout comes amid ongoing scrutiny of how AI chatbots handle mental health discussions, where the line between helpful conversation and unintended harm has proven difficult to manage consistently.

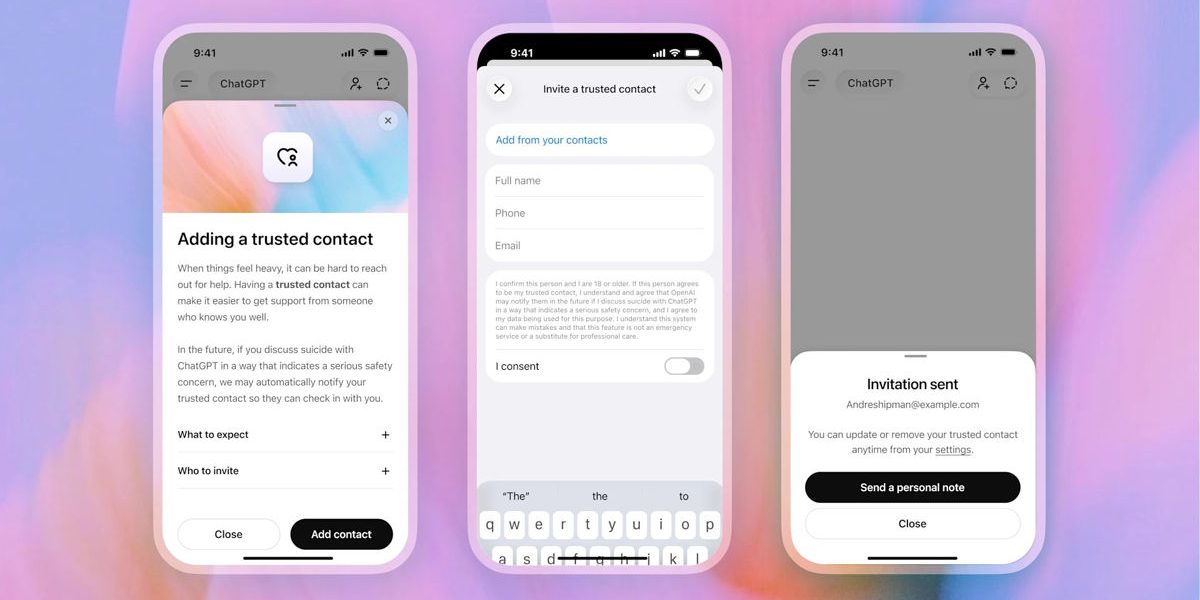

The system works on an opt-in basis for personal ChatGPT accounts only. Users select a trusted friend, family member, or caregiver through the settings and send an invitation. That contact has one week to accept. If the platform’s automated detection flags a conversation, a small team of human reviewers steps in, aiming to assess the situation within an hour. Should reviewers confirm a genuine concern, the trusted contact receives a brief notification via email, text, or in-app message encouraging them to check in. Importantly, OpenAI does not share any conversation transcripts or details with the contact.

The feature is limited to one adult contact per account and is not available on business, enterprise, or education plans. The company developed it in consultation with the American Psychological Association and its own Expert Council on Well-Being and AI. OpenAI has cited internal data showing that roughly 0.15 percent of weekly active users engage in conversations with explicit indicators of potential self-harm. The move follows reports, including a 2025 BBC investigation, that highlighted instances where ChatGPT provided responses that could exacerbate vulnerable situations, especially in extended exchanges where safety guardrails sometimes weaken.

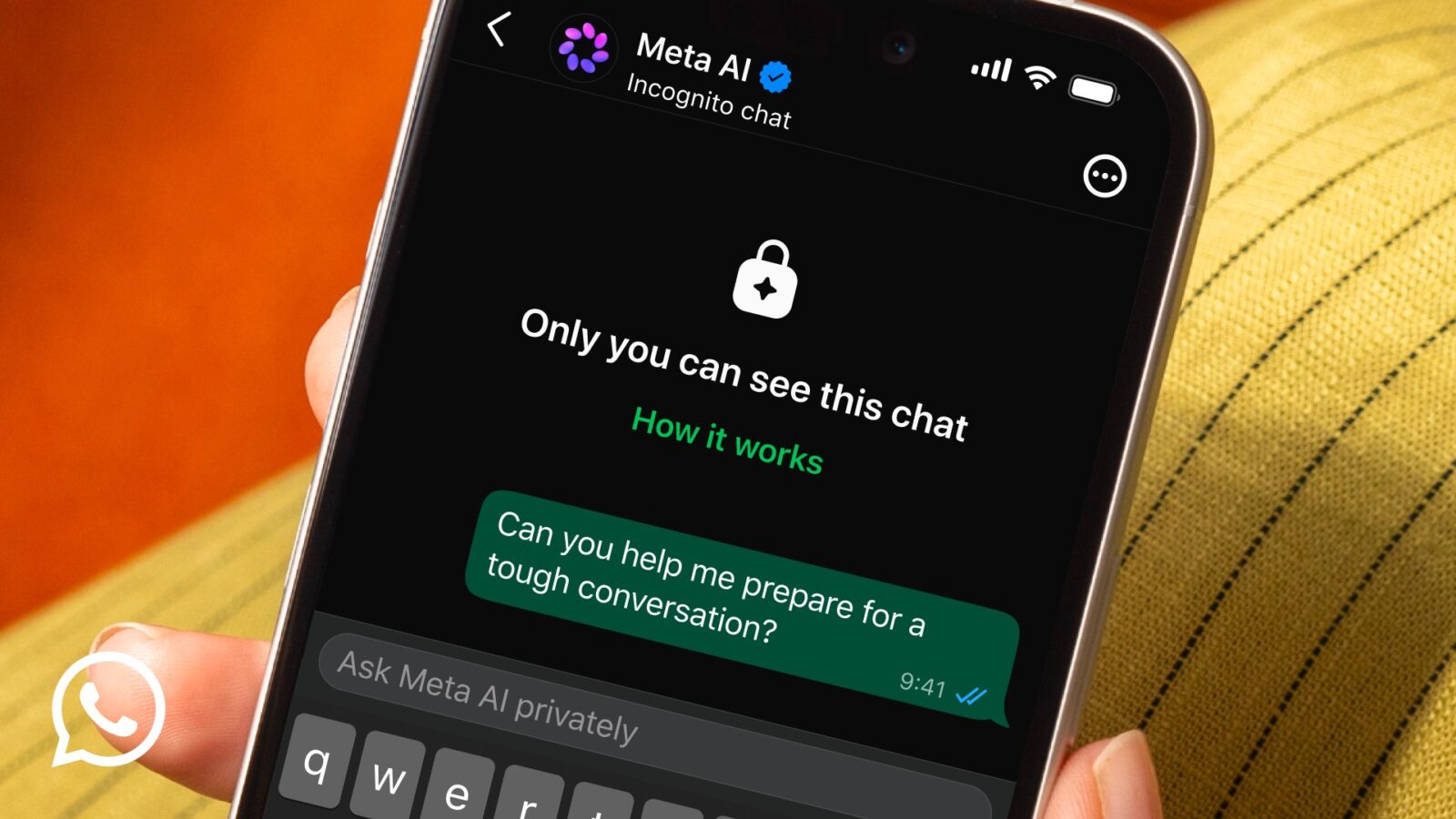

For many, the appeal is clear. Millions now turn to AI for deeply personal matters they hesitate to discuss with others, creating a new kind of digital confidant. In that context, a safety net that leads back to real human connections addresses a legitimate need. Yet the feature also highlights inherent tensions. Users often choose AI precisely for its privacy and lack of judgment. Introducing any form of external notification, however carefully designed, risks eroding that sense of safety for those who need it most. Not every crisis will be detected, and false positives or delayed reviews could create their own complications.

The broader challenge reflects the maturing reality of AI companions. Tools that started as conversational aids have quietly become outlets for emotional support, crisis navigation, and daily introspection. OpenAI’s incremental safety improvements show recognition of this shift, but they also underscore the limits of current technology. Automated detection remains imperfect, human review capacity is finite, and the ethical balance between intervention and autonomy continues to evolve.

Trusted Contact represents a pragmatic step rather than a complete solution. It acknowledges that AI systems now occupy intimate spaces in people’s lives, spaces where errors carry real human consequences. Whether it proves effective will depend on careful implementation, transparent communication with users, and ongoing adjustments as both technology and societal expectations change. For now, it offers an optional bridge for those who want one, while leaving the fundamental privacy trade-off in the hands of individual users.