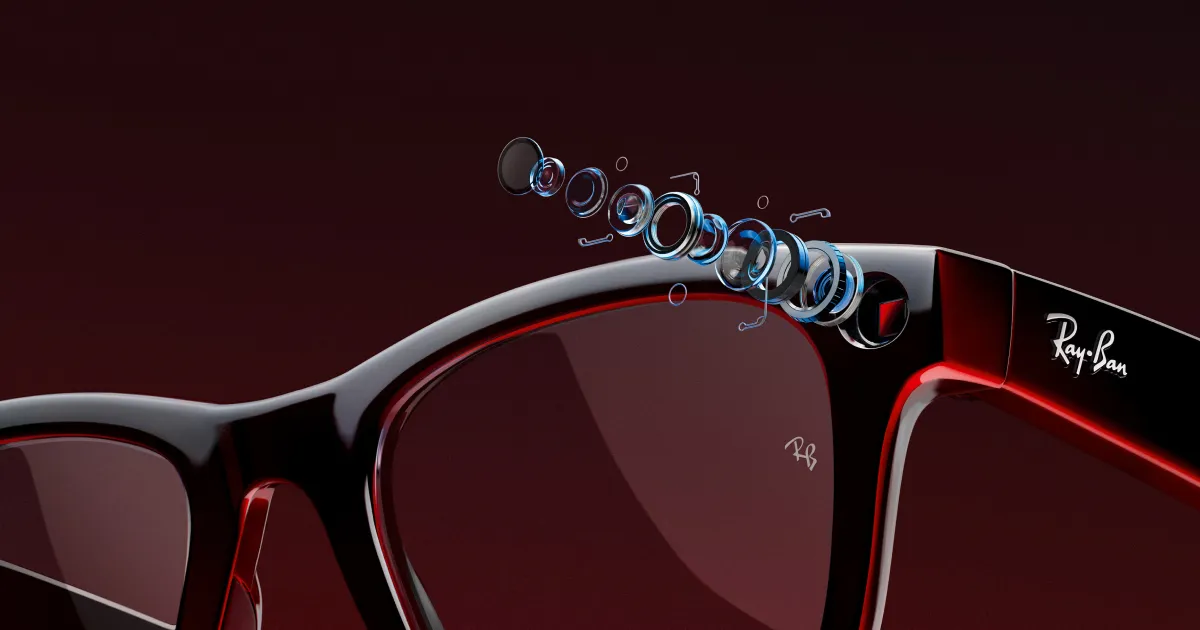

Meta is continuing its push to make smart glasses feel less like experimental gadgets and more like practical everyday devices. The latest software update for the company’s Ray-Ban smart glasses introduces a feature called “neural handwriting,” allowing users to write messages in the air using hand movements detected through an EMG wristband accessory. At the same time, Meta is expanding third-party app support with integrations for services including Spotify, Audible, Disney+, and ESPN.

The neural handwriting system works by reading electrical muscle signals in the user’s forearm through an electromyography (EMG) wristband. Instead of tapping controls on the glasses or relying entirely on voice commands, users can draw letters in the air with subtle hand motions. The software interprets those gestures as digital text for searches, messaging, and other interactions.
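To make the idea concrete, here is a deliberately simplified sketch of how muscle-signal windows could be turned into letters. It is a toy illustration, not Meta's method: the three-channel layout, the `TEMPLATES` values, and the function names are all assumptions invented for this example, and a production system would rely on far more sophisticated learned models.

```python
import math

# Hypothetical per-channel amplitude "templates" for three letters.
# These numbers are made up for illustration only.
TEMPLATES = {
    "a": [0.8, 0.2, 0.1],
    "b": [0.2, 0.9, 0.3],
    "c": [0.1, 0.3, 0.7],
}


def rms_features(window):
    """Root-mean-square amplitude per channel for one window of samples.

    `window` is a list of samples; each sample is a list of channel values.
    """
    n_channels = len(window[0])
    return [
        math.sqrt(sum(sample[ch] ** 2 for sample in window) / len(window))
        for ch in range(n_channels)
    ]


def classify(features, templates=TEMPLATES):
    """Pick the letter whose template is closest in Euclidean distance."""
    return min(templates, key=lambda letter: math.dist(features, templates[letter]))


def decode(windows):
    """Decode a sequence of signal windows into a string, one letter each."""
    return "".join(classify(rms_features(w)) for w in windows)


def fake_window(amplitudes, n=20):
    """Synthetic stand-in for a recording: alternating-sign samples per channel."""
    return [[a if i % 2 == 0 else -a for a in amplitudes] for i in range(n)]


if __name__ == "__main__":
    windows = [fake_window(TEMPLATES[ch]) for ch in "cab"]
    print(decode(windows))  # prints "cab"
```

The sketch only captures the general shape of the pipeline the article describes: sense a signal, reduce it to features, and map those features to text.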

It sounds futuristic in the way wearable tech presentations often do, but the concept itself is not entirely new. Gesture-based computing has been circulating through research labs and prototype demos for years, from Microsoft Kinect experiments to various augmented reality interfaces that never fully entered the mainstream. What makes Meta’s implementation notable is less the novelty of air-writing itself and more the fact that the company is attempting to ship it as a real consumer-facing feature rather than a controlled demo.

The practical value will likely depend on how accurate and frictionless the experience feels in daily use. Writing in the air may sound convenient, but people tend to favor the fastest and least awkward way to communicate. Voice controls already struggle in noisy spaces and in public settings where users do not want to speak commands aloud. Meta appears to be positioning neural handwriting as a quieter alternative for those environments.

The update also broadens the glasses’ usefulness beyond photography and calls. Support for Spotify, Audible, Disney+, and ESPN allows users to control media playback, continue audiobooks, check sports updates, or browse entertainment content directly through the glasses interface. That kind of ecosystem expansion matters more than many flashy hardware demos, because wearable devices have historically failed when they lacked practical software integration.

Meta’s larger strategy is becoming increasingly clear. The company is trying to establish an ecosystem around lightweight smart glasses before competitors like Apple fully enter the category. Recent wearable products have gradually shifted away from the fully immersive augmented reality ambitions that dominated tech presentations a few years ago. Instead, companies are focusing on simpler utility-first designs that blend cameras, AI assistants, audio features, and contextual computing into familiar eyewear formats.

There are still obvious limitations. The EMG wristband required for neural handwriting is a separate accessory, and Meta has not announced pricing or regional availability. The social awkwardness factor also remains difficult to ignore. Air-writing invisible text in public may ultimately feel no less strange than talking to voice assistants in crowded spaces did a decade ago.

Still, Meta’s latest update reflects a broader industry shift toward wearable devices that prioritize continuous, low-friction interaction over dramatic AR experiences. Whether consumers fully embrace that future remains uncertain, but smart glasses are slowly evolving from novelty hardware into products that attempt to handle practical daily tasks.