Meta has introduced a new AI video editing tool that pushes the boundaries of what users can do with short-form content. Built on its Movie Gen research platform, the editor allows users to radically transform videos using a wide range of stylistic effects—no professional skills required. The release marks one of the first public-facing tools to emerge from Meta’s recent AI video research, and while it’s still early days, the results hint at a future where high-end video effects become as commonplace as photo filters.

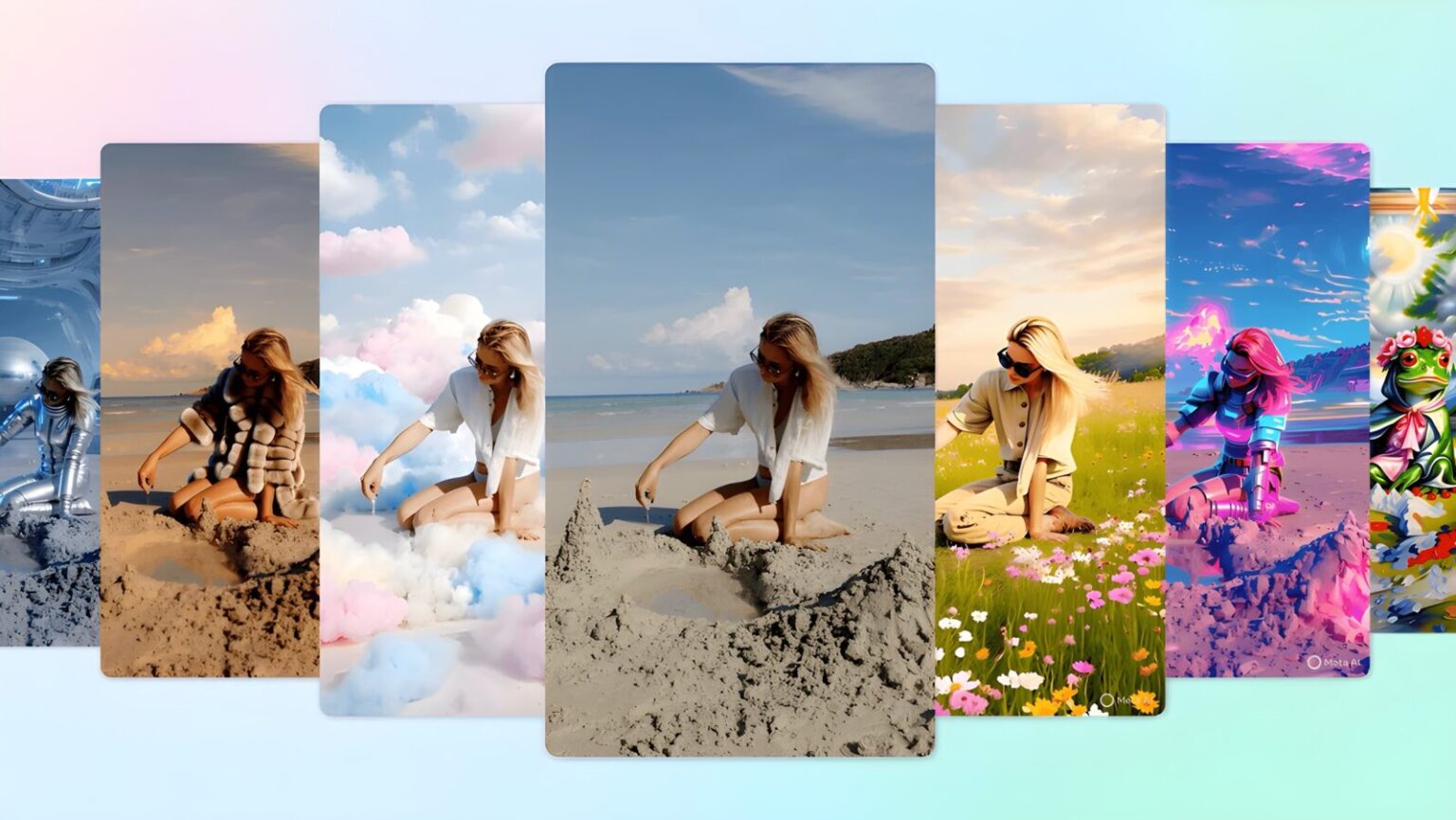

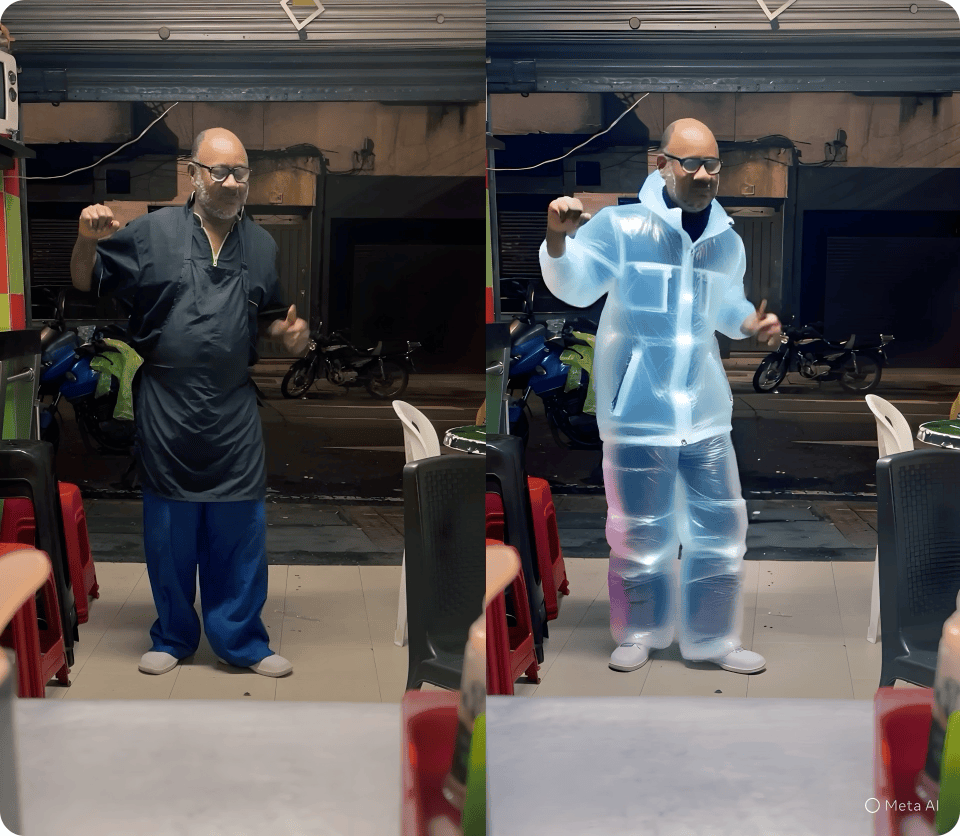

Currently, the tool offers more than 50 preset prompts, enabling users to reimagine their footage in visual styles that range from the whimsical to the hyperreal. A simple clip of someone dancing can be altered to appear as if they’re performing in a misty dreamscape or rendered as a marble statue. Other effects include transporting subjects to beaches or snowy forests, layering in surreal lighting, or altering wardrobe details—like changing a jacket into a translucent puffer suit. The interface emphasizes ease of use: pick a style, apply it, and the AI handles the rest.

What users can’t do—yet—is enter custom text prompts. That feature is on the roadmap for later this year, which could further unlock the tool’s potential for more tailored creative outputs. But even with its current limitations, the visual transformations are advanced enough to feel almost cinematic in their polish.

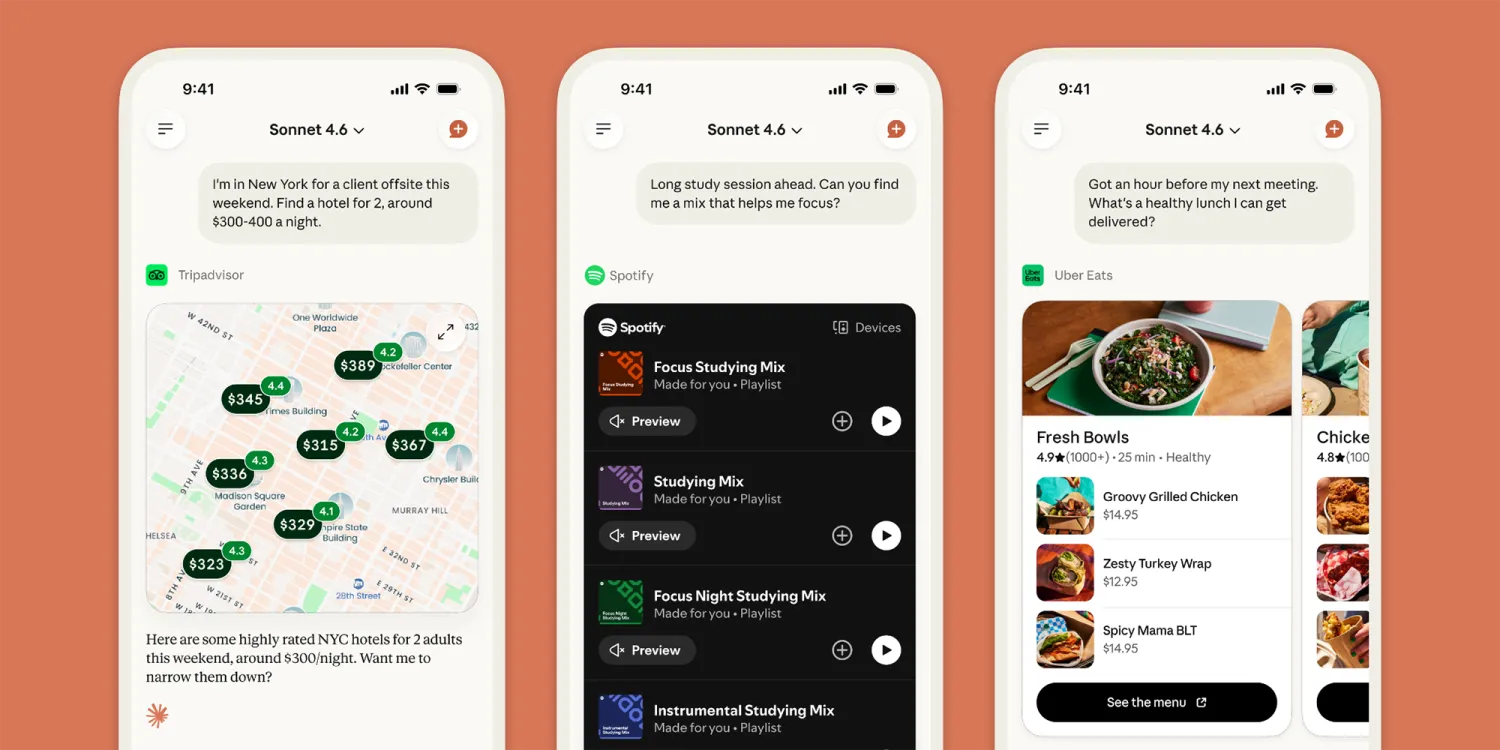

The editor is available now through the Meta AI app, the Edits app, and the meta.ai website. Instagram integration is planned but will roll out gradually. Adam Mosseri, head of Instagram, has publicly teased the feature for some time, and his recent demo showcased just how radical these effects can look in everyday videos.

Although the editor builds on technology capable of generating content entirely from scratch—including video synthesis and photo-to-video transformations—this version is focused strictly on editing existing footage. That decision likely reflects Meta’s cautious approach to releasing generative tools in consumer products, where the potential for misuse remains high.

Still, Meta’s move to bring Movie Gen-based editing tools to the public signals growing confidence in its AI capabilities. And as competitors like OpenAI, Google, and Runway race to develop similar tools, Meta is trying to differentiate itself by anchoring these features inside its widely used platforms. By embedding generative video features directly into apps like Instagram, Meta could redefine how users think about short-form video: not as something recorded, but as something co-created with AI.

Whether this tool becomes another fleeting novelty or a staple of digital storytelling remains to be seen. But in its current form, Meta’s AI editor offers a glimpse into a future where video creation is constrained not by what’s captured on camera, but by what users can imagine and what a model can render in seconds.