Meta is rolling out expanded AI-powered age assurance measures aimed at tightening controls on underage users across its social platforms. The initiative combines machine learning analysis, default settings for younger accounts, and additional family tools in response to longstanding concerns about teen safety on social media.

The core focus remains enforcing the minimum age requirement of 13 for services including Instagram, Facebook, and Messenger. Meta’s systems now draw on a broader set of signals (posts, comments, bios, and captions) to detect contextual clues such as school references or age-specific milestones. This contextual review is being extended to more areas within the apps. Separately, new visual analysis tools examine general characteristics in photos and videos to estimate broad age ranges without relying on facial recognition or individual identification. When paired with behavioural and textual data, these methods reportedly improve detection rates, though the company has not released independently verified performance metrics.

Accounts flagged as potentially underage are asked to verify their age, and those that cannot confirm eligibility may be removed. Reporting flows have been simplified both inside the apps and through the help centre, while AI-assisted review processes aim to standardise and accelerate human moderation. Additional safeguards target users who repeatedly create new accounts to evade restrictions. Many of these features already operate globally, with further markets receiving them in stages.

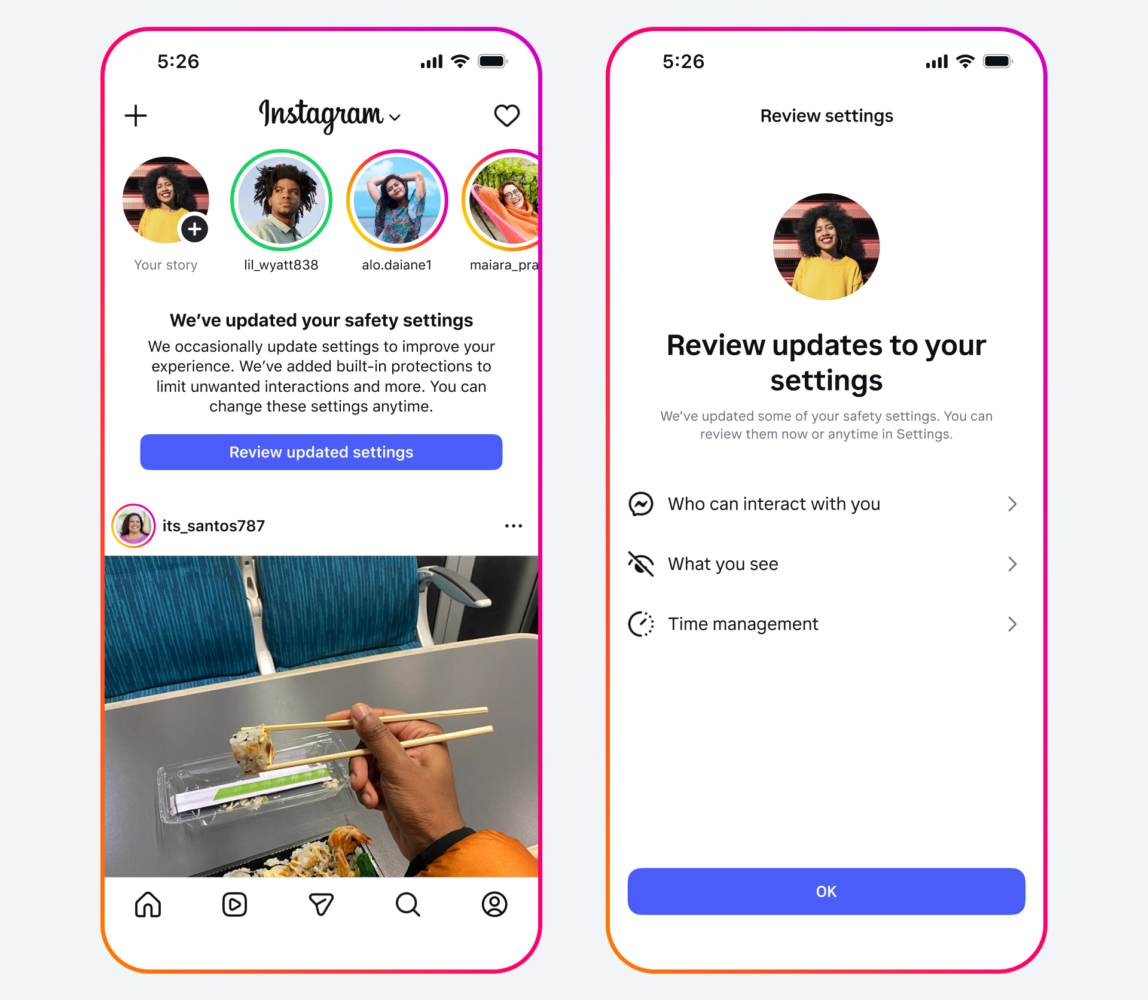

On the protection side, Meta’s Teen Account system automatically applies stricter defaults for users under 18, including limits on who can contact them and a 13+ content filter intended to reduce exposure to sensitive material. Hundreds of millions of accounts have been placed under these settings since launch. The company is also widening proactive detection that can re-categorise accounts even when an adult birthdate was provided, shifting them into age-appropriate experiences. This capability, already active in selected regions, will reach more countries over time.

Parents are addressed through new notifications that encourage them to verify their teen's age and to talk openly about the importance of sharing accurate information. These build on the existing Family Center, which offers monitoring tools and guidance. Suspicious attempts to change an account's stated age still trigger a check by ID or facial age estimation.

Meta has long argued that age assurance presents an industry-wide problem best addressed at the operating system or app-store level rather than by individual platforms. Such an approach, it suggests, could deliver more consistent protections while raising fewer privacy issues than fragmented app-by-app solutions. In practice, the company continues to rely on activity signals and user reports alongside its AI tools.

Critics may note that these measures arrive after years of regulatory pressure and public scrutiny over teen mental health impacts and data practices on social platforms. Past enforcement has sometimes proven patchy, with determined users finding workarounds. While the technical investments reflect genuine operational challenges in large-scale moderation, questions remain about long-term effectiveness and the balance between safety and user privacy. Meaningful progress will likely depend on transparent results, external audits, and whether broader ecosystem coordination materialises beyond individual company efforts.