Adobe is rolling out a new Firefly AI assistant that aims to streamline tasks across its Creative Cloud suite by coordinating actions between familiar tools like Photoshop, Premiere, Lightroom, and others.

The product, which began as a preview called Project Moonlight last October, will enter public beta in the coming weeks. Pricing details remain unclear, though the assistant is expected to fit within Firefly’s existing credit-based model. At its core, the assistant lets users describe a desired outcome in plain language, then handles the underlying steps (switching between apps, applying edits, and managing workflows) while still allowing manual intervention at any stage.

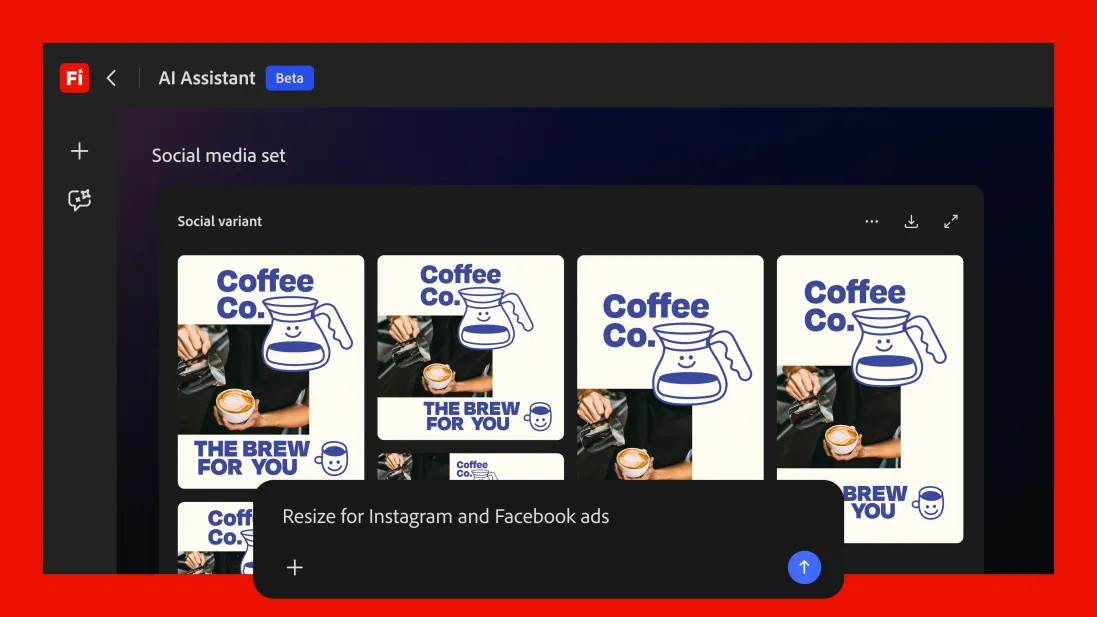

Controls appear as text prompts, buttons, or sliders depending on the context. For example, while editing a product photo placed in a forest scene, the assistant might surface a simple slider to adjust the density of trees and foliage. Adobe says that, over time, the assistant will learn individual creative preferences and surface more relevant suggestions. The company is also introducing pre-built “skills,” such as a social media assets workflow that automatically crops or expands images for different platforms, optimizes file sizes, and organizes the results.

This move continues Adobe’s measured push into AI-assisted creative work. The company has already introduced dedicated assistants for Photoshop, Express, and Acrobat, and on Wednesday indicated it is exploring better integration with third-party large language models. Competitors including Canva and Figma have been developing similar agentic features, yet Adobe positions its approach around the depth of its established toolset rather than starting from scratch.

Critics might note that navigating Adobe’s sprawling catalog of professional software has long been a pain point for users, especially those who only need occasional access to advanced features. By hiding some of that complexity behind natural-language commands, Adobe is addressing a genuine source of friction in its ecosystem. The real test will be how reliably the assistant executes multi-step tasks without introducing new errors or unexpected results. Past AI features in creative tools have sometimes delivered impressive demos while falling short in production environments, where precision matters most.

On the Firefly side, Adobe is adding practical video enhancements: noise reduction for speech, reverb and music adjustments, a color correction tool, and tighter integration with its stock library. The service is also incorporating Kling 3.0 and Kling 3.0 Omni models into its roster of third-party AI options, giving users more choices without forcing them to leave the platform.

In a market where creative software has grown increasingly complex and subscription-heavy, tools like the Firefly AI assistant reflect a broader industry attempt to make professional-grade capabilities feel more approachable. Whether it genuinely reduces the learning curve or simply layers another AI interface on top of existing complexity remains to be seen once the beta is in users’ hands. For now, it signals Adobe’s ongoing effort to evolve its flagship applications in response to rapid advances in generative AI, even as questions linger around reliability, cost transparency, and long-term impact on creative workflows.