Meta is expanding its use of artificial intelligence across Facebook and Instagram, focusing on two areas that have long drawn user complaints: customer support and content moderation. The company says these updates are intended to make its platforms easier to navigate while improving how harmful or misleading content is handled at scale.

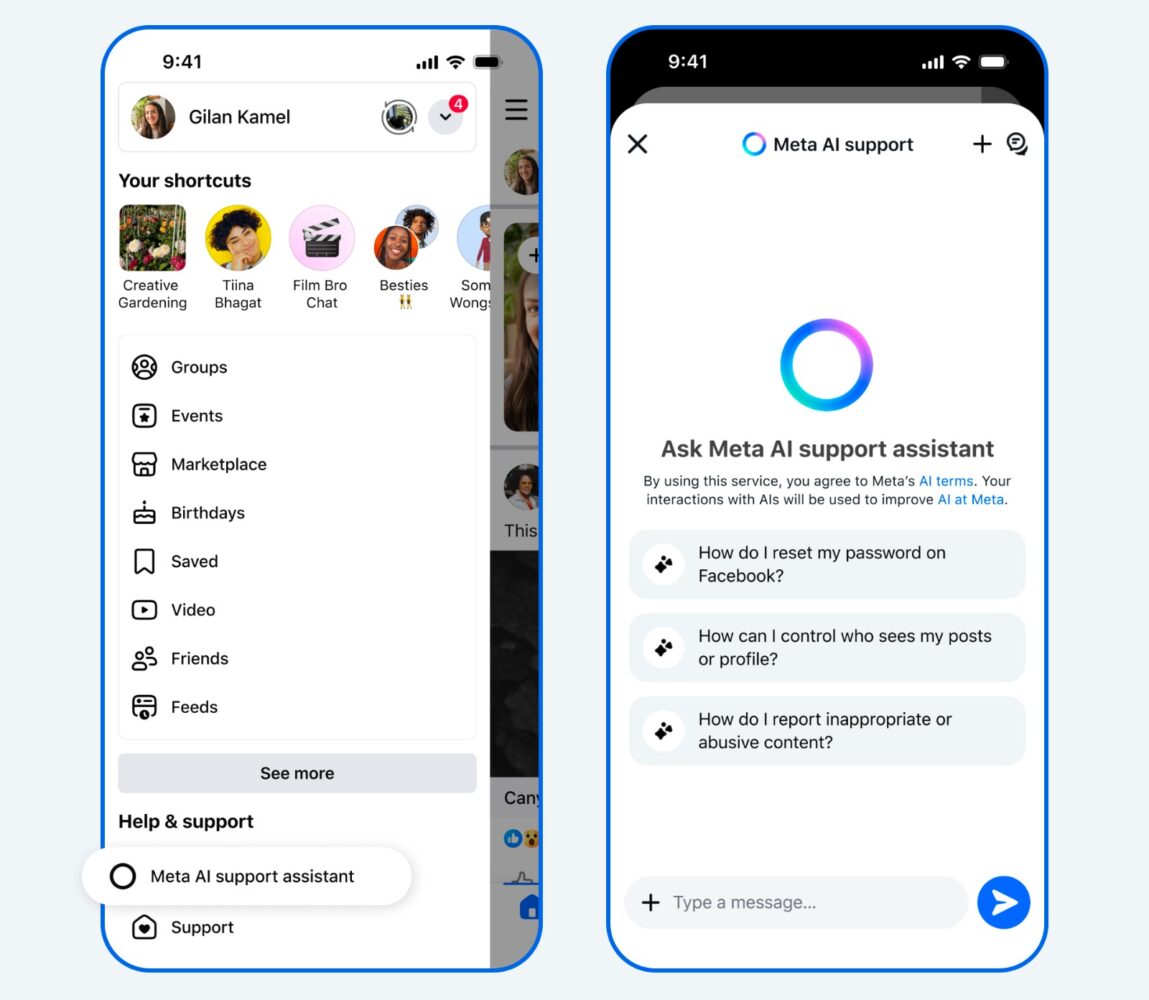

A central part of this rollout is the Meta AI support assistant, now being introduced globally across both mobile apps and desktop environments where Meta AI is available. The tool is designed to replace or at least reduce reliance on traditional help center searches by offering direct, automated assistance for common account-related issues. These include password resets, privacy adjustments, reporting scams or impersonation, and understanding why content may have been removed.

The assistant operates within the apps themselves, aiming to streamline what has historically been a fragmented and often slow support experience. Meta claims that responses typically arrive within seconds, which, if consistent, would mark a noticeable shift from the delays users have often encountered. The system can also take limited actions on behalf of users, though the scope of those actions appears to be expanding gradually rather than being fully available at launch.

Alongside support improvements, Meta is continuing to invest in AI systems for content enforcement. These systems are being tested to identify and address issues such as scams, impersonation, and illegal activity more efficiently. According to the company, early results suggest that AI models can detect certain types of violations at a higher rate than human reviewers alone, while also reducing error rates in some categories. For example, the systems have reportedly flagged thousands of scam attempts per day that had previously gone unnoticed, and have significantly reduced reports of celebrity impersonation.

There are also claims of improved detection of more nuanced threats, such as account takeovers or fraudulent websites mimicking legitimate brands. These systems analyze patterns that might not be obvious in isolation, such as unusual login behavior combined with profile changes. Meta also highlights expanded language coverage, saying its AI tools can now operate across the languages used by the vast majority of internet users and adapt to regional slang and evolving online behavior.
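To make the idea of combining signals concrete, here is a minimal Python sketch of how two events that look harmless on their own might be flagged when they occur together. It is purely illustrative: the field names, the `takeover_risk` function, and the simple rule it applies are assumptions made for this example, not a description of how Meta's systems work.

```python
from dataclasses import dataclass


@dataclass
class AccountEvents:
    """Hypothetical recent activity on one account (illustrative fields only)."""
    new_device_login: bool
    login_country_changed: bool
    email_changed: bool
    profile_name_changed: bool


def takeover_risk(events: AccountEvents) -> str:
    """Return "review" only when a login anomaly coincides with a profile change."""
    login_anomaly = events.new_device_login or events.login_country_changed
    profile_change = events.email_changed or events.profile_name_changed
    # Either signal alone is common and usually benign; the combination is what stands out.
    if login_anomaly and profile_change:
        return "review"
    return "ok"
```

In this sketch, a login from a new device is ignored by itself, as is an email change by itself, but the two together route the account to review. A production system would presumably rely on learned models and far more signals rather than a hand-written rule like this one.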

Despite the increased reliance on automation, the company maintains that human oversight will remain part of the process, particularly for high-risk decisions like account bans or legal escalations. The broader plan suggests a gradual shift away from third-party moderation toward more internally managed systems supported by AI.

While these developments point to a more automated infrastructure, their effectiveness will depend on consistency and transparency, especially given ongoing concerns around moderation accuracy and user trust. Meta’s approach indicates a long-term transition rather than an immediate overhaul, with further iterations expected as these systems are tested and refined.