Google is repositioning Stitch as a broader design environment built around generative AI, aiming to simplify how interfaces move from idea to prototype. The update introduces what the company calls “vibe design,” a workflow that starts from loosely defined prompts, intentions, and references rather than from structured wireframes.

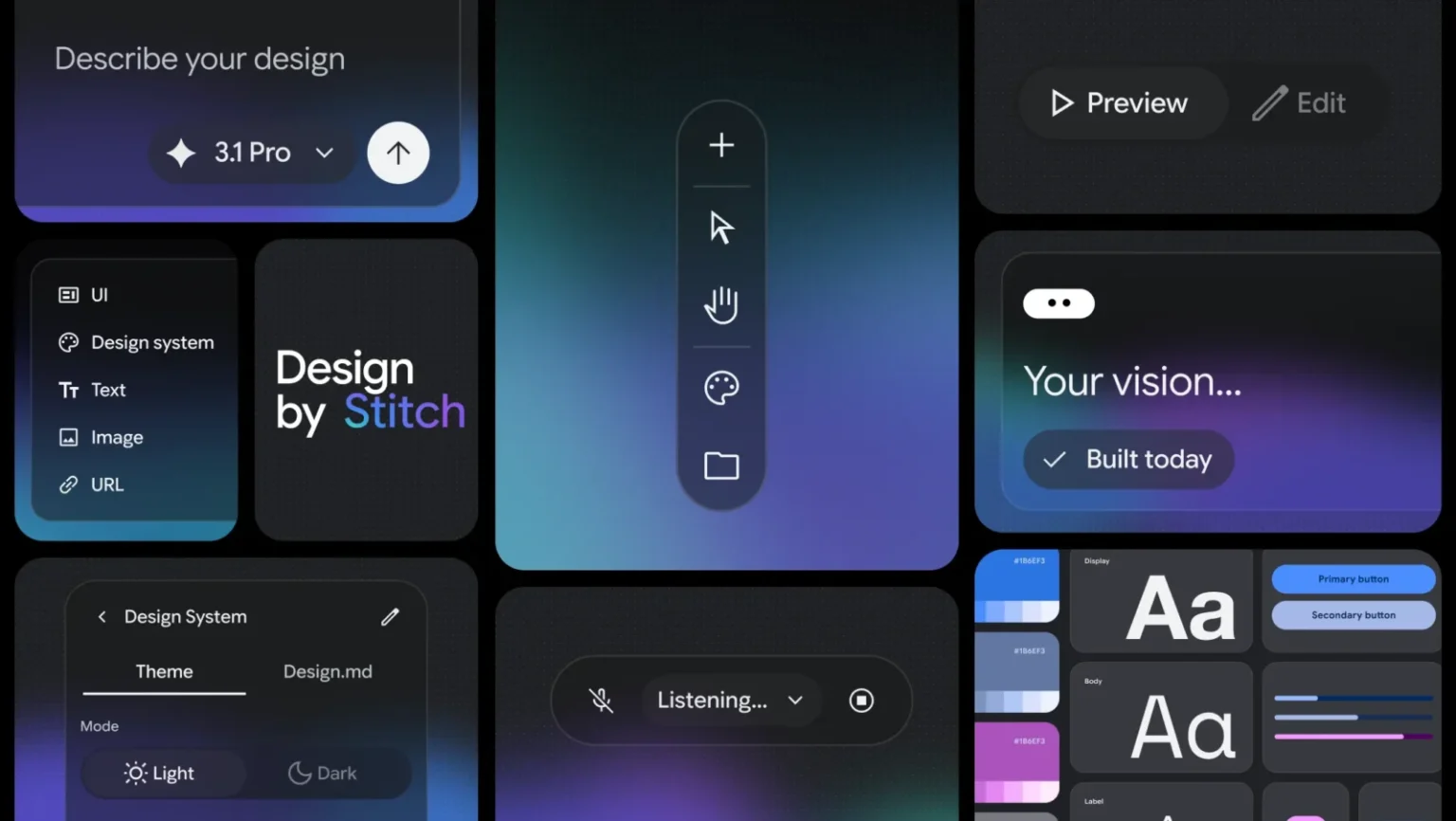

At its core, Stitch now functions as an AI-driven design canvas where users can generate interface concepts using natural language. Instead of mapping layouts manually, users describe goals, user experience intentions, or visual inspirations, and the system produces interface options that can be refined in place. This approach reflects a wider trend in design tools, where early-stage ideation is increasingly handled through generative systems rather than traditional drafting methods.

A central part of the update is a redesigned, infinite canvas that supports multiple input types, including text, images, and code. The idea is to let fragmented inputs coexist in one workspace and gradually evolve into structured interface designs. While this may reduce friction in early ideation, it also shifts more decision-making to the system, whose output may not align with established design standards or constraints unless it is carefully reviewed.

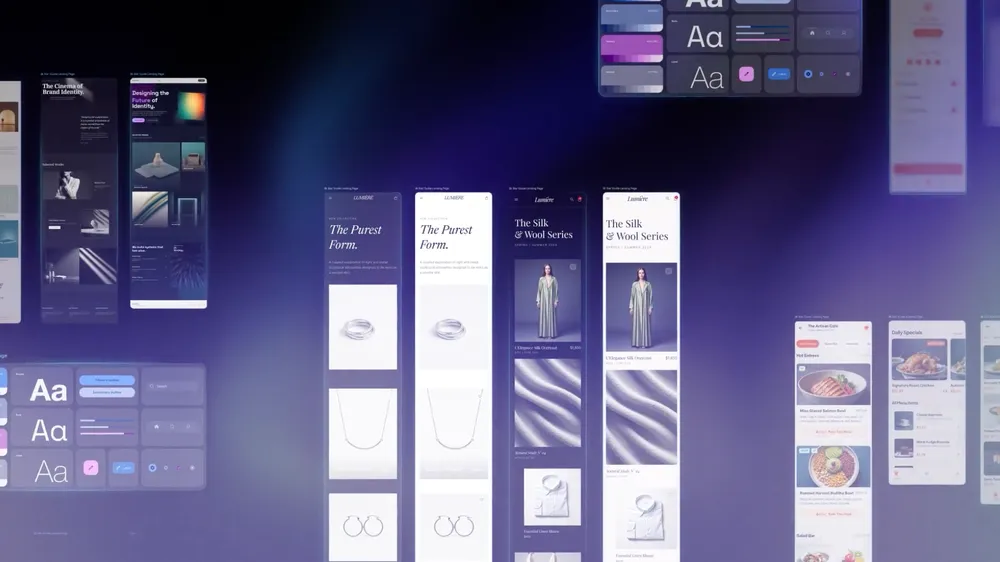

The platform also introduces a design agent that operates across the entire project. This agent is intended to track changes, suggest iterations, and help manage multiple design directions simultaneously. Alongside it, an “agent manager” feature organizes parallel explorations, which could be useful for teams comparing variations, though it adds another layer of abstraction that may take time to integrate into existing workflows.

Another addition is DESIGN.md, a portable format for design systems. Users can extract design rules from existing websites or reuse system guidelines across projects. The format targets a common problem, keeping designs consistent from one project to the next, though its effectiveness will depend on how accurately the system interprets and applies those rules.

Prototyping is positioned as near-instant. Stitch can connect generated screens into interactive flows and simulate user journeys with minimal manual setup. The system also predicts subsequent screens based on user interactions, which may speed up iteration but could introduce assumptions that require manual correction.
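To make the idea of connected screens concrete, an interactive flow can be thought of as a small graph in which each screen maps interactions to a next screen. The sketch below is purely illustrative and is not based on Stitch's actual data model or API; the `Screen` class, the `simulate_journey` function, and the screen and action names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Screen:
    """A hypothetical screen in a prototype flow (not a Stitch type)."""
    name: str
    # Maps an interaction label (e.g. a button tap) to the next screen's name.
    transitions: dict[str, str] = field(default_factory=dict)


def simulate_journey(screens, start, actions):
    """Follow a list of interactions from `start` and return the screens visited."""
    path = [start]
    current = start
    for action in actions:
        next_name = screens[current].transitions.get(action)
        if next_name is None:
            # No screen is wired to this interaction, so the journey stops here.
            break
        path.append(next_name)
        current = next_name
    return path


# An assumed four-screen flow, invented for illustration.
screens = {
    "login": Screen("login", {"submit": "home"}),
    "home": Screen("home", {"open_settings": "settings", "open_profile": "profile"}),
    "settings": Screen("settings", {"back": "home"}),
    "profile": Screen("profile"),
}

journey = simulate_journey(screens, "login", ["submit", "open_settings", "back"])
print(" -> ".join(journey))  # login -> home -> settings -> home
```

In this framing, a predicted "next screen" amounts to a transition the tool fills in on the user's behalf, which is where an incorrect assumption would need manual correction.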

Voice input has also been added, allowing users to modify designs conversationally. The system can respond with critiques, generate variations, or adjust layouts in real time. While this may streamline certain tasks, its practical value will likely depend on how precise and context-aware those interactions prove to be in real-world use.

Finally, Stitch is being integrated into broader development workflows through export tools and APIs, including compatibility with platforms like AI Studio. This positions it less as a standalone design tool and more as a bridge between concept, design, and development.

Overall, Stitch reflects a continued shift toward AI-assisted software creation, where the emphasis is on speed and accessibility. While these tools can reduce the barrier to entry and accelerate early exploration, they also raise questions about design quality, authorship, and the balance between automation and human control. As with similar platforms, its long-term value will depend on how well it supports refinement and precision, not just rapid generation.