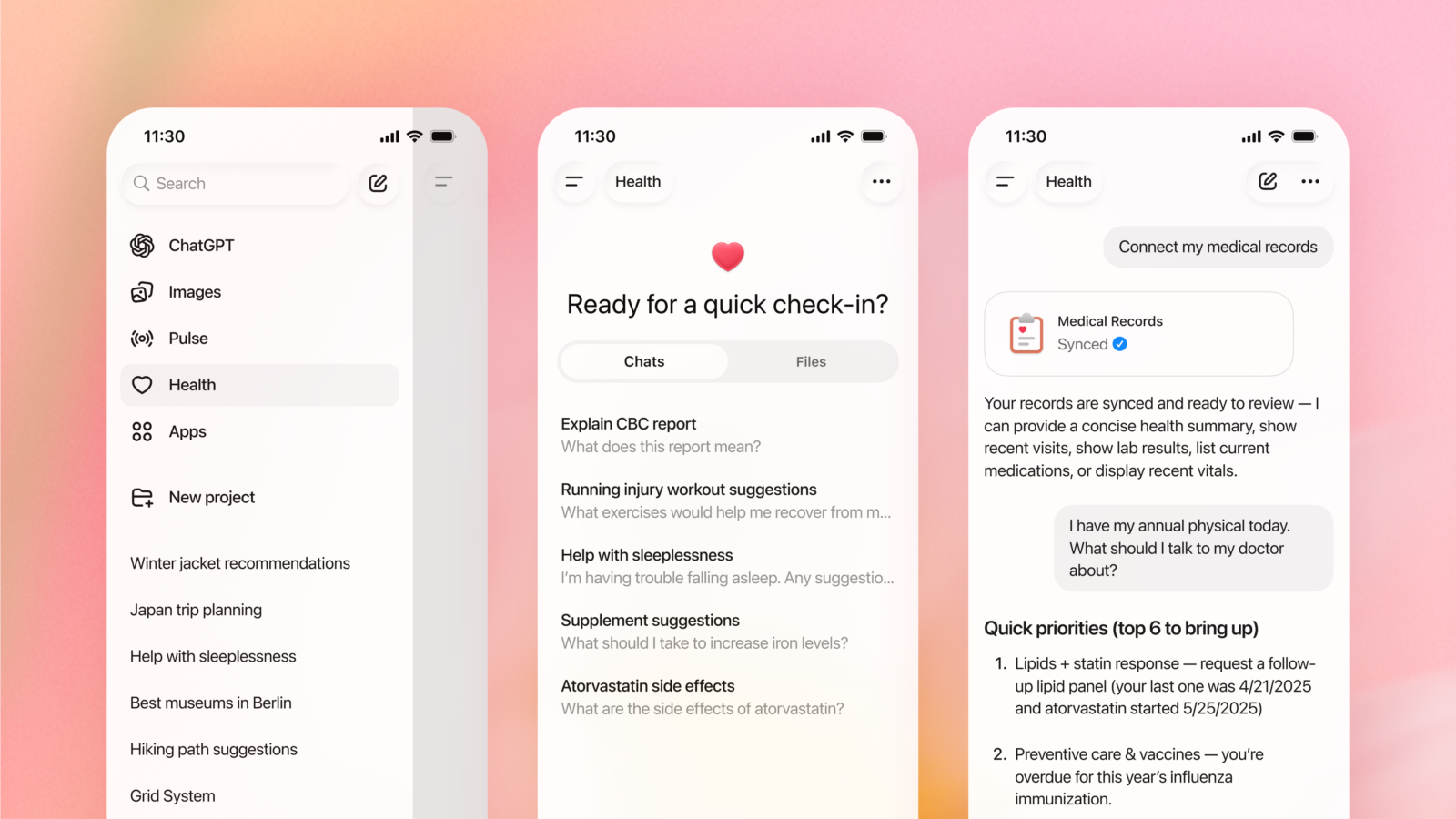

OpenAI has introduced a new health-focused experience inside ChatGPT, reflecting how frequently people already turn to AI with wellness and medical questions. The feature, called ChatGPT Health, appears as a separate, sandboxed tab within the ChatGPT interface and is designed to keep health conversations isolated from a user’s general chat history.

According to OpenAI, hundreds of millions of users ask health-related questions each week, ranging from symptom explanations to fitness and nutrition guidance. ChatGPT Health is positioned as a response to that demand, offering a more personalized environment where users can ask questions about their health without mixing that information into other conversations or memories.

One of the most notable aspects of ChatGPT Health is its ability to connect with external health and wellness platforms. Users can choose to link personal data from services such as Apple Health, Peloton, MyFitnessPal, and Weight Watchers. Once connected, the AI can tailor its responses using that information, potentially making guidance around diet, exercise, and lifestyle more relevant to the individual. OpenAI says data shared through ChatGPT Health will not be used to train its models.

The company is careful to set expectations. In its announcement, OpenAI stresses that ChatGPT Health is not intended for diagnosis or treatment. Instead, it is positioned as a support tool that can help users understand test results, prepare questions ahead of a doctor’s appointment, or make sense of general health information. The feature is currently in a testing phase, with access available via a waitlist. A broader rollout is planned across all subscription tiers, although some app integrations may be limited by region.

That cautionary language, however, does not fully address ongoing concerns about how people actually use AI tools. While OpenAI can label the feature as informational only, it has little control over whether users rely on it for decisions that should involve medical professionals. Chatbots are still prone to errors and fabricated information, particularly in complex or ambiguous scenarios, and there have already been documented cases of users following incorrect advice generated by AI systems.

Another area that remains largely unaddressed in the announcement is mental health. Chatbots, including ChatGPT, are increasingly used as informal substitutes for therapy, in part because of accessibility and cost. This trend has drawn scrutiny following reports of users experiencing harm after relying too heavily on AI conversations for emotional support. ChatGPT Health does not appear to introduce new safeguards specific to those risks.

The idea of a constantly available assistant that can help interpret health data is undeniably appealing, especially for people navigating complex medical systems or unfamiliar terminology. At the same time, the launch highlights an uncomfortable tension: AI is becoming more capable and more integrated into sensitive areas of life at a speed that trust and oversight cannot realistically match.

For now, ChatGPT Health sits in an in-between space. It may be useful for organization, clarification, and preparation, but it is not a replacement for trained professionals. As with many AI tools, its value will depend less on what it can technically do and more on how cautiously users choose to rely on it.