Adobe has introduced Firefly Image 5, the latest version of its AI image generation model, expanding the tool’s creative range and technical precision. The update brings major upgrades such as native 4-megapixel resolution, support for layered editing, and the ability for artists to train custom models based on their own work, positioning Firefly as one of Adobe’s most creator-centric AI releases yet.

According to Adobe, Firefly Image 5 generates images at a native 4MP resolution, four times the pixel count of the previous model, which relied on upscaling from 1MP outputs. The new model also delivers more realistic human rendering, improved lighting accuracy, and better handling of complex compositions, all long-standing challenges for AI image tools.

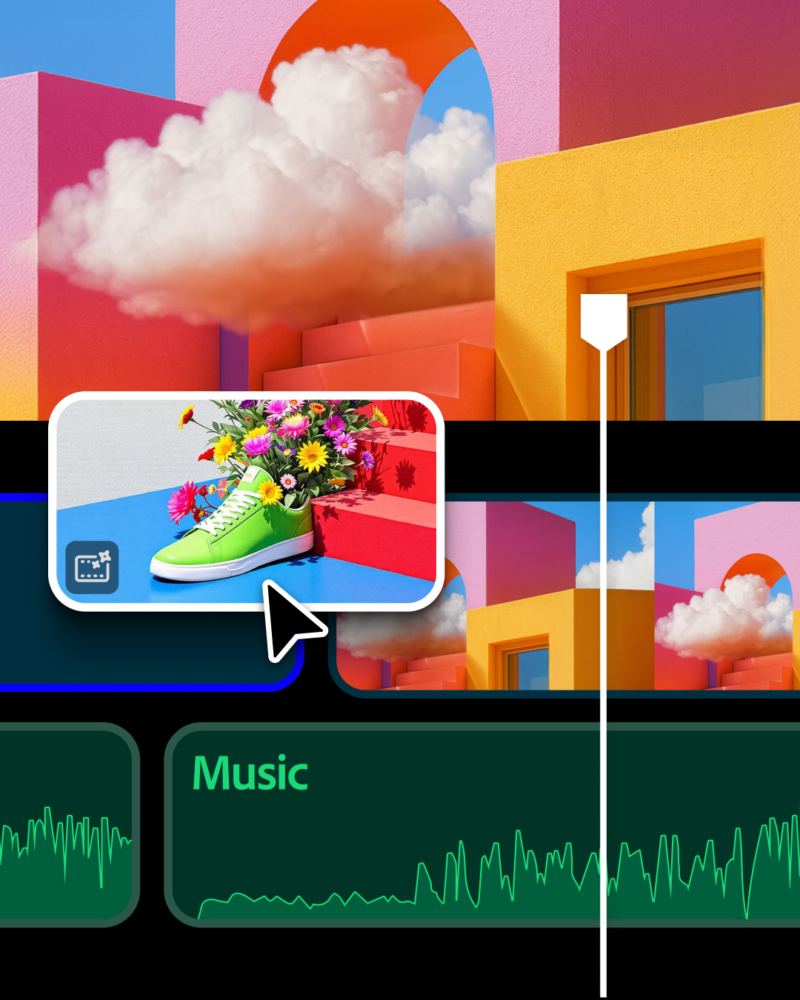

Perhaps the most significant feature is Firefly’s new layer-based editing. The system now treats each object in a composition as an editable layer, allowing users to manipulate specific elements with simple prompts or standard tools like resize and rotate. Adobe says the layer functionality preserves detail and image consistency, giving users more precise creative control without sacrificing fidelity.

The update also expands Firefly’s ecosystem. The platform already supports third-party AI models from partners such as OpenAI, Google, Runway, Topaz, and Flux; now, Adobe is introducing a closed beta that lets users build their own personalized models. Artists can upload images, illustrations, or sketches to train a Firefly model that reflects their individual style, enabling them to generate new works in their own aesthetic language.

Firefly’s web interface has also been redesigned to streamline workflow. Users can now toggle between image and video generation directly from the main prompt bar, select preferred AI models, and modify aspect ratios. The homepage includes shortcuts to other Adobe apps and displays recent generations and saved files for easier project management.

On the video side, Firefly’s generation and editing tool now supports layers and a timeline-based interface — a design currently in private beta. This structure mirrors traditional editing software but leverages AI to automate sequencing and adjustment tasks.

Firefly is also expanding into sound. New audio-generation features let users create entire soundtracks or voiceovers via text prompts, powered by ElevenLabs’ models. Another addition, a keyword-driven “word cloud” interface, simplifies the process of writing creative prompts for image, video, or audio generation.

With these updates, Adobe continues to integrate generative AI deeper into its ecosystem while focusing on creative ownership — giving artists not just new tools, but the means to shape AI around their own visual identity.